OPTIMAL STRUCTURED LIGHT A LA CARTE

Parsa Mirdehghan, Wenzheng Chen, Kyros Kutulakos

CVPR 2018 Spotlight

Abstract

We consider the problem of automatically generating sequences of structured-light patterns for active stereo triangulation of a static scene. Unlike existing approaches that use predetermined patterns and reconstruction algorithms tied to them, we generate patterns on the fly in response to generic specifications: number of patterns, projector-camera arrangement, workspace constraints, spatial frequency content, etc. Our pattern sequences are specifically optimized to minimize the expected rate of correspondence errors under those specifications for an unknown scene, and are coupled to a sequence-independent algorithm for per-pixel disparity estimation. To achieve this, we derive an objective function that is easy to optimize and follows from first principles within a maximum-likelihood framework. By minimizing it, we demonstrate automatic discovery of pattern sequences, in under three minutes on a laptop, that can outperform state-of-the-art triangulation techniques.

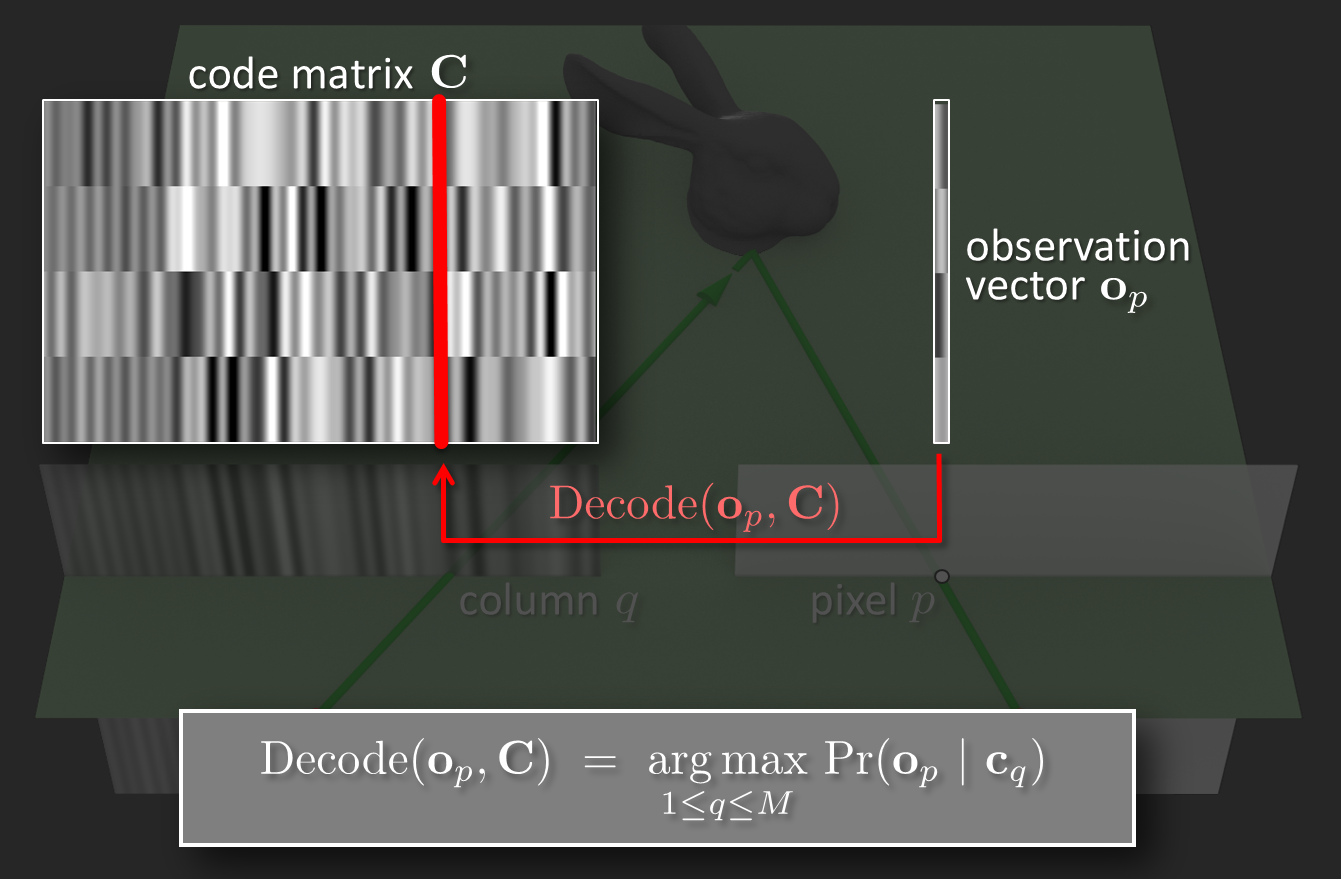

Generic Decoding Algorithm

We show that there is an extremely simple pattern-independent algorithm for computing pixel-wise correspondences that is near optimal in a maximum-likelihood sense. This is in contrast to all previous techniques, which rely on specialized decoding algorithms that are designed for a particular pattern sequence.
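To make the idea of pattern-independent decoding concrete, here is a minimal sketch. It assumes the decoder picks, for each camera pixel, the projector column whose code vector has the highest zero-mean normalized cross-correlation (ZNCC) with the observed intensities. The function names `zncc` and `decode_pixel` and the toy code matrix are illustrative assumptions, not the authors' code.

```python
import math

def zncc(a, b):
    """Zero-mean normalized cross-correlation of two equal-length vectors."""
    ma = sum(a) / len(a)
    mb = sum(b) / len(b)
    da = [x - ma for x in a]
    db = [x - mb for x in b]
    na = math.sqrt(sum(x * x for x in da))
    nb = math.sqrt(sum(x * x for x in db))
    if na == 0.0 or nb == 0.0:
        return 0.0  # a constant vector carries no code information
    return sum(x * y for x, y in zip(da, db)) / (na * nb)

def decode_pixel(observed, code_columns):
    """Return the index of the projector column whose code best matches."""
    scores = [zncc(observed, col) for col in code_columns]
    return max(range(len(scores)), key=scores.__getitem__)

# Toy code matrix (made-up values): 4 projector columns, 3 patterns each.
code_columns = [
    [0.0, 0.5, 1.0],
    [1.0, 0.0, 0.5],
    [0.5, 1.0, 0.0],
    [1.0, 0.5, 0.0],
]

# Simulated observation of column 2, scaled by an albedo of 0.3
# and shifted by an ambient offset of 0.1.
observed = [0.3 * v + 0.1 for v in code_columns[2]]
print(decode_pixel(observed, code_columns))
```

Because ZNCC ignores positive scaling and constant offsets, the same routine works for any code matrix, which is what makes a decoder of this kind independent of the pattern sequence.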

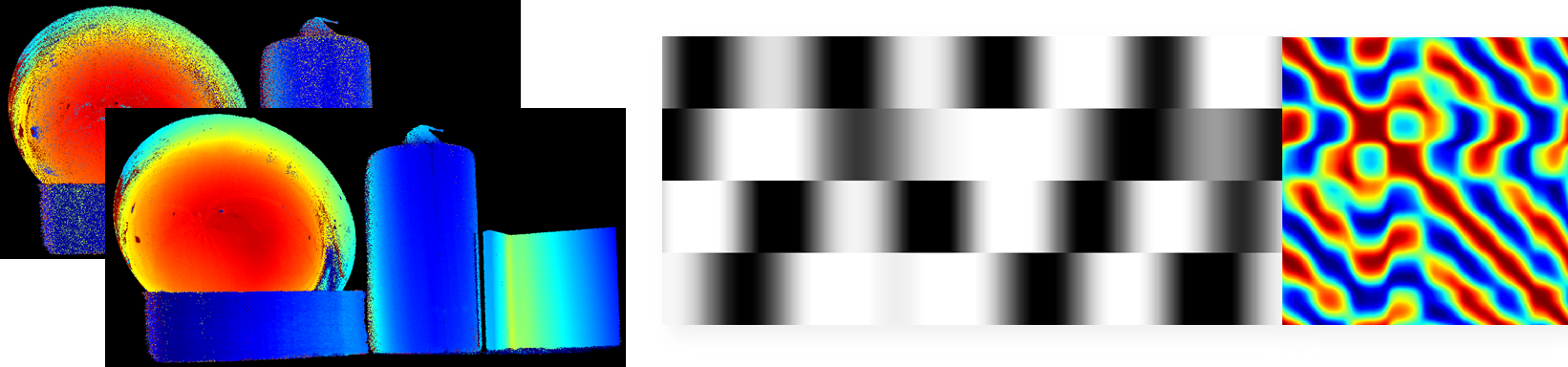

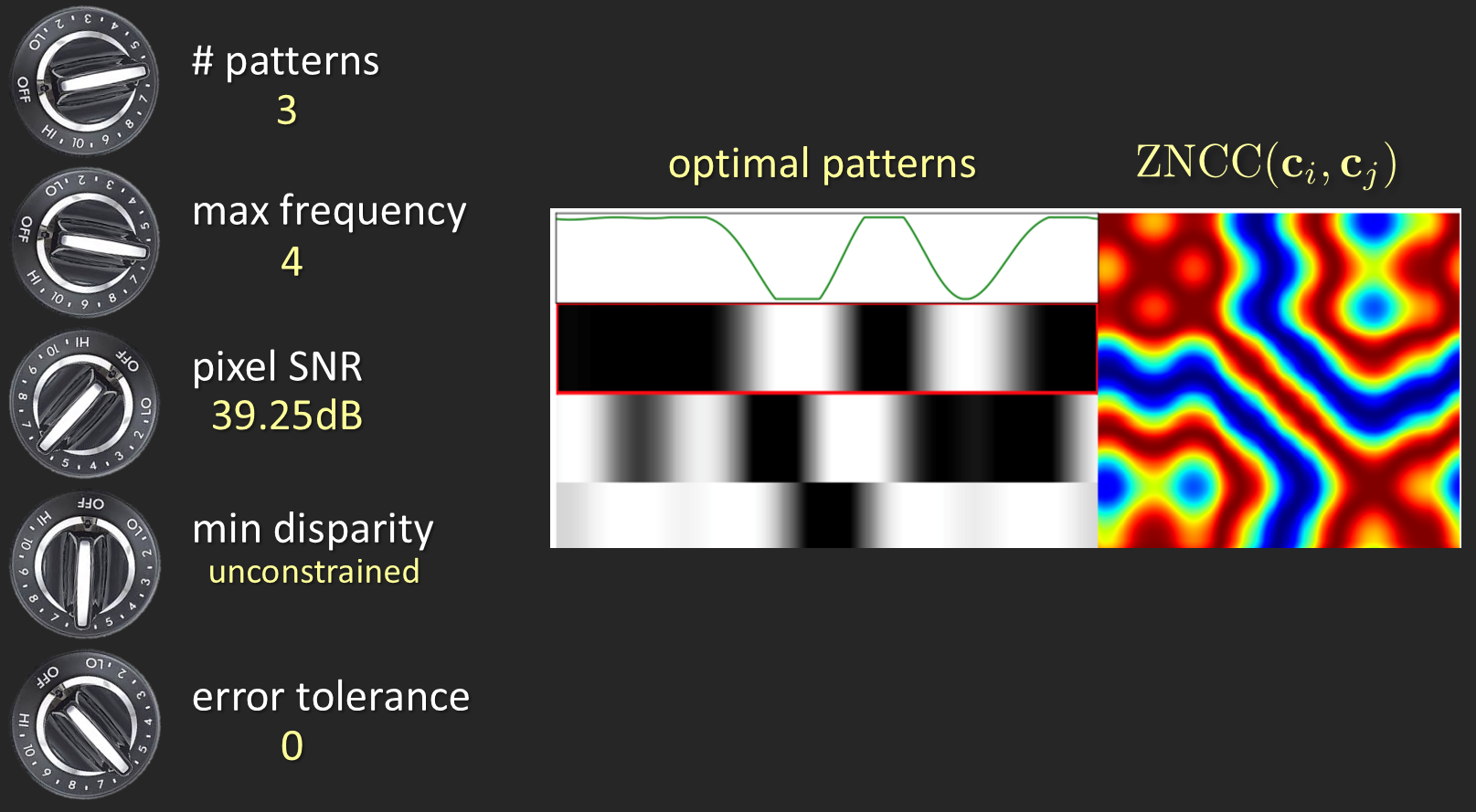

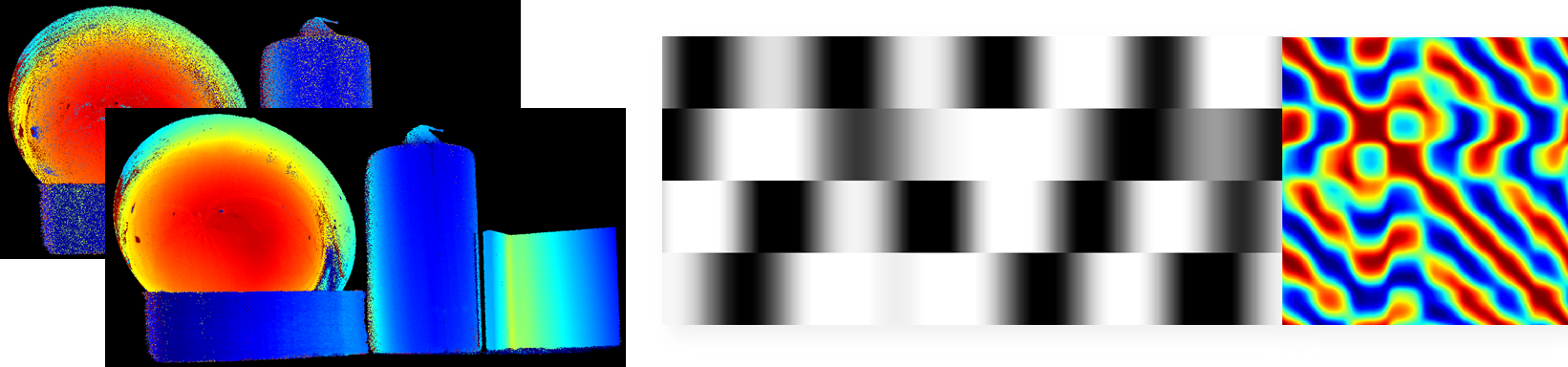

Optimal Projection Patterns

We derive an algorithm for computing pattern sequences on the fly that are optimized for a particular system and imaging conditions. The optimization is defined to minimize the expected number of decoding errors.

In other words, rather than proposing yet another sequence of patterns for structured light, we provide an algorithm that generates one. The algorithm takes as input a set of user specifications, including the number of projector and camera pixels, the desired number of patterns, the specific camera and projector arrangement, a bounding volume that contains the objects to be scanned, any desired bounds on the patterns' spatial frequency content, and possibly many other specifications. It outputs a sequence of patterns that minimizes the expected number of stereo correspondence errors under those specified conditions. We call this paradigm Optimal Structured Light à La Carte.
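The quantity being minimized, the expected number of correspondence errors, can be illustrated with a small Monte Carlo estimate. The sketch below is an assumption-laden stand-in: it uses a ZNCC-based decoder, a simple image model (random albedo, ambient offset, Gaussian noise), and plain random search over candidate code matrices. The paper's actual objective and optimizer are not reproduced here, and every name and parameter value is hypothetical.

```python
import math
import random

def zncc(a, b):
    """Zero-mean normalized cross-correlation of two equal-length vectors."""
    ma, mb = sum(a) / len(a), sum(b) / len(b)
    da = [x - ma for x in a]
    db = [x - mb for x in b]
    na = math.sqrt(sum(x * x for x in da))
    nb = math.sqrt(sum(x * x for x in db))
    if na == 0.0 or nb == 0.0:
        return 0.0
    return sum(x * y for x, y in zip(da, db)) / (na * nb)

def decode(observed, code_columns):
    """Index of the code column with the highest ZNCC score."""
    scores = [zncc(observed, col) for col in code_columns]
    return max(range(len(scores)), key=scores.__getitem__)

def estimate_error_rate(code_columns, noise_sigma, trials, rng):
    """Fraction of simulated pixels decoded to the wrong column."""
    errors = 0
    for _ in range(trials):
        true_col = rng.randrange(len(code_columns))
        albedo = rng.uniform(0.2, 1.0)   # assumed reflectance range
        ambient = rng.uniform(0.0, 0.2)  # assumed ambient light range
        observed = [albedo * v + ambient + rng.gauss(0.0, noise_sigma)
                    for v in code_columns[true_col]]
        if decode(observed, code_columns) != true_col:
            errors += 1
    return errors / trials

def random_code(num_columns, num_patterns, rng):
    """A candidate code matrix with intensities drawn uniformly in [0, 1)."""
    return [[rng.random() for _ in range(num_patterns)]
            for _ in range(num_columns)]

# Random search over a handful of candidates (a stand-in for real optimization).
rng = random.Random(0)
candidates = [random_code(16, 4, rng) for _ in range(5)]
rates = [estimate_error_rate(c, 0.02, 500, rng) for c in candidates]
best = min(range(len(candidates)), key=rates.__getitem__)
print("estimated error rates:", rates)
print("best candidate index:", best)
```

A real system would fold the remaining specifications (projector-camera geometry, bounding volume, spatial frequency bounds) into both the image model and the search; this sketch only shows how an error-rate objective ranks candidate pattern sequences.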

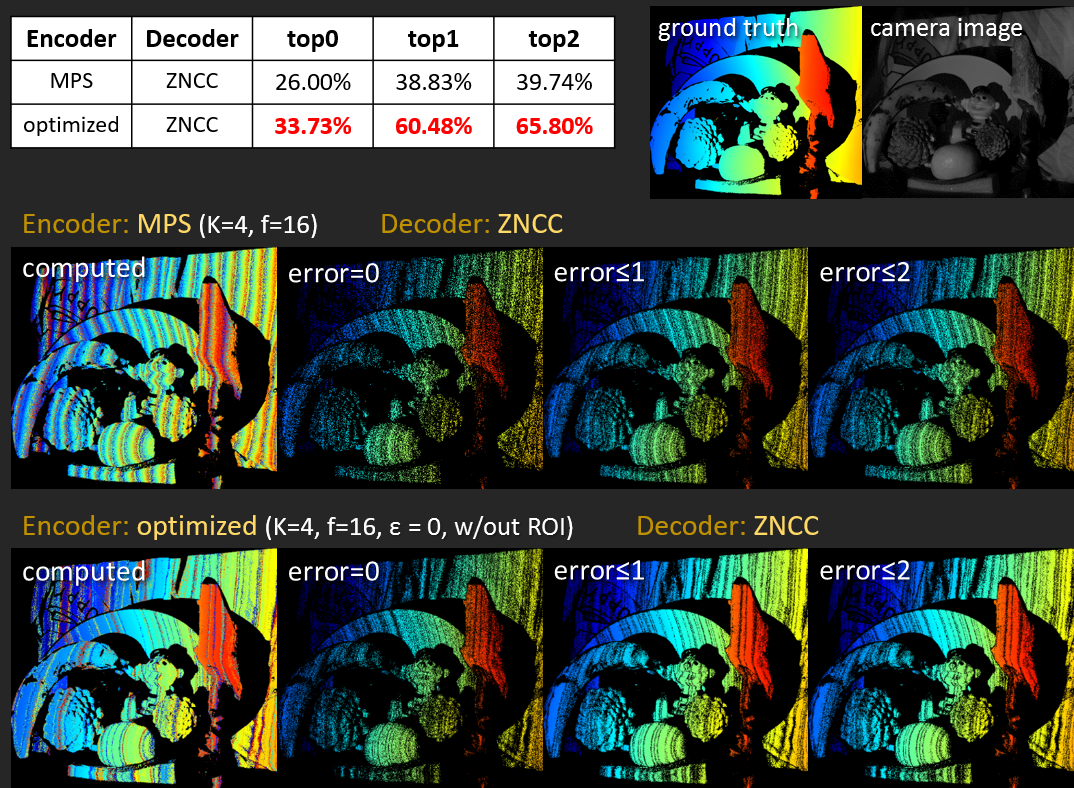

Improved Accuracy and Robustness

In practice, our qualitative experiments show that these optimized patterns outperform state-of-the-art techniques such as Micro Phase Shifting and Embedded Phase Shifting, particularly in the hardest cases (e.g., a low number of patterns, low-SNR conditions, and scenes with defocus or indirect light).

Our approach produces depth maps with far fewer outliers. The quantitative results also show that we achieve higher accuracy in ground-truth experiments.

Resources

Paper

Supplemental Material

Additional Experimental Results

Spotlight Presentation

Poster

For code and sample patterns, please contact Parsa Mirdehghan (parsa[at]cs[dot]toronto[dot]edu)