Gaussian Process Dynamical Models for Human Motion

Abstract

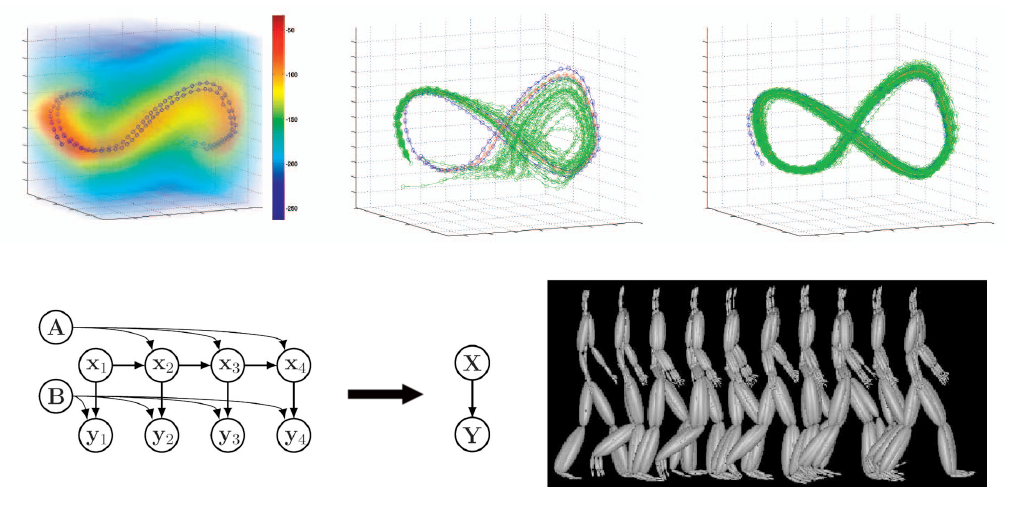

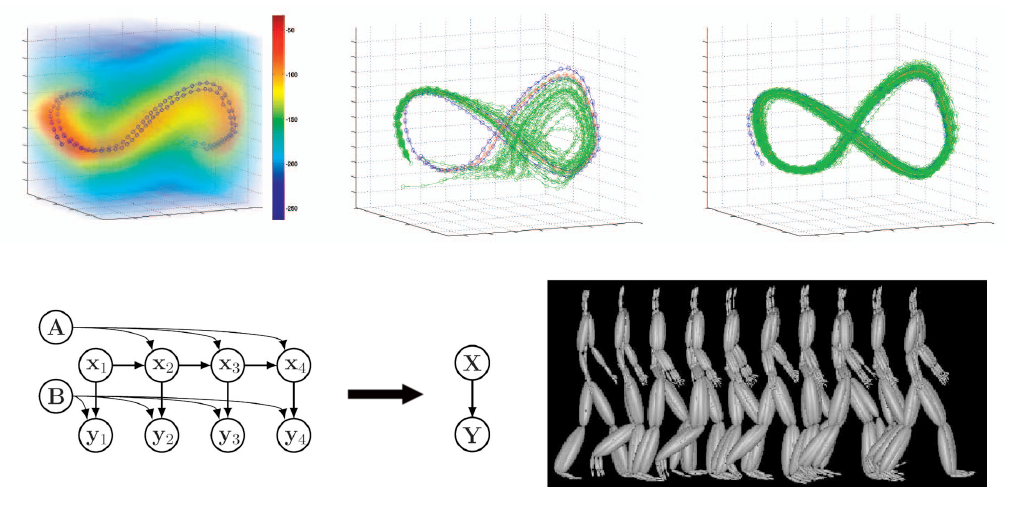

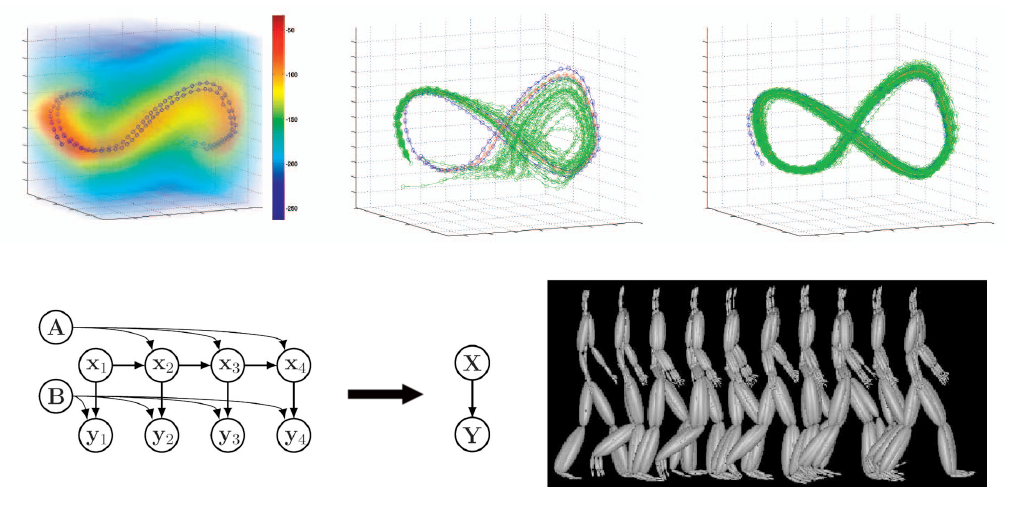

We introduce Gaussian process dynamical models (GPDMs) for nonlinear time series analysis, with applications to learning models of human pose and motion from high-dimensional motion capture data. A GPDM is a latent variable model. It comprises a low-dimensional latent space with associated dynamics, as well as a map from the latent space to an observation space. We marginalize out the model parameters in closed form by using Gaussian process priors for both the dynamical and the observation mappings. This results in a nonparametric model for dynamical systems that accounts for uncertainty in the model. We demonstrate the approach and compare four learning algorithms on human motion capture data, in which each pose is 50-dimensional. Despite the use of small data sets, the GPDM learns an effective representation of the nonlinear dynamics in these spaces.
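
As a rough illustration of the structure described in the abstract, the sketch below evaluates a simplified GPDM-style objective in NumPy: one Gaussian process marginal likelihood for the map from latent positions to observations, plus one for the map from each latent position to the next. It is not the authors' code. The squared-exponential kernel, the fixed noise level, the unit Gaussian prior on the first latent point, and all function names are assumptions made for this example, and the hyperparameter priors and per-dimension output scaling of the full model are omitted.

```python
import numpy as np


def rbf_kernel(A, B, variance=1.0, inv_width=1.0):
    """Squared-exponential kernel between the rows of A and the rows of B."""
    sq_dists = (
        np.sum(A**2, axis=1)[:, None]
        + np.sum(B**2, axis=1)[None, :]
        - 2.0 * A @ B.T
    )
    # Clip tiny negative values caused by floating-point rounding.
    return variance * np.exp(-0.5 * inv_width * np.maximum(sq_dists, 0.0))


def gp_neg_log_marginal(K, targets):
    """Negative log marginal likelihood of `targets` (n x dims), treating each
    column as an independent zero-mean GP draw with shared covariance K."""
    n, dims = targets.shape
    L = np.linalg.cholesky(K)
    whitened = np.linalg.solve(L, targets)       # L^{-1} targets
    log_det = 2.0 * np.sum(np.log(np.diag(L)))   # log |K|
    data_fit = np.sum(whitened**2)               # tr(K^{-1} targets targets^T)
    return 0.5 * (dims * log_det + data_fit + n * dims * np.log(2.0 * np.pi))


def gpdm_neg_log_joint(X, Y, noise=1e-2):
    """Simplified -log p(Y, X) for latent positions X (N x d) and poses Y (N x D).
    Kernel choice and noise level are illustrative assumptions."""
    N, d = X.shape
    # Observation mapping: latent positions -> poses.
    K_Y = rbf_kernel(X, X) + noise * np.eye(N)
    obs_term = gp_neg_log_marginal(K_Y, Y)
    # Dynamics mapping: x_t -> x_{t+1} (first-order model).
    X_in, X_out = X[:-1], X[1:]
    K_X = rbf_kernel(X_in, X_in) + noise * np.eye(N - 1)
    dyn_term = gp_neg_log_marginal(K_X, X_out)
    # Assumed unit Gaussian prior on the first latent point.
    first_term = 0.5 * np.sum(X[0] ** 2) + 0.5 * d * np.log(2.0 * np.pi)
    return obs_term + dyn_term + first_term


# Toy data (synthetic, not motion capture): a circular 2-D latent trajectory
# mapped linearly to 5-D observations with a little noise.
rng = np.random.default_rng(0)
t = np.linspace(0.0, 2.0 * np.pi, 30, endpoint=False)
X = np.column_stack([np.cos(t), np.sin(t)])
Y = X @ rng.normal(size=(2, 5)) + 0.05 * rng.normal(size=(30, 5))
value = gpdm_neg_log_joint(X, Y)
print(value)
```

In a learning setting, a quantity of this kind would be minimized with respect to the latent positions and kernel hyperparameters; here it is only evaluated at fixed values to show how the two Gaussian process terms fit together.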

People

Jack M. Wang

David J. Fleet

Aaron Hertzmann

Papers

Wang, J. M., Fleet, D. J., Hertzmann, A. Gaussian Process Dynamical Models for Human Motion. In IEEE Transactions on Pattern Analysis and Machine Intelligence. February, 2008. pp. 283-298.

Errata: Figures 7 and 8 on page 292 are incorrectly printed; the corrected figures are appended to the end of the PDF.

Note: Over the years, a few people have asked me about how Equation (10) is derived.

Wang, J. M., Fleet, D. J., Hertzmann, A. Gaussian Process Dynamical Models. In Proc. NIPS 2005. December, 2005. Vancouver, Canada. pp. 1441-1448.

Software

A version of this work has been implemented by Neil Lawrence as an extension to his GP-LVM software packages. Visit his Gaussian process software page for downloading information.

The current version of our GPDM code includes code that generates HMC samples and other mocap utilities, but it is not nearly as organized as Neil's code.

Videos

Supplemental Video for PAMI

Pages below contain animated gifs that link to corresponding QuickTime movies (some over 10 MB); jpegs link to higher-resolution jpeg images.

3D GPDM

2D GPDM

Missing Data Demo

Golf Demo

Acknowledgements

The authors would like to thank Neil Lawrence and Raquel Urtasun for their comments on the manuscript, and Ryan Schmidt for assisting in producing the supplemental video. The volume rendering figures were generated using Joe Conti’s code on www.mathworks.com.

This project is funded in part by the Alfred P. Sloan Foundation, Canadian Institute for Advanced Research, Canada Foundation for Innovation, Microsoft Research, Natural Sciences and Engineering Research Council (NSERC) Canada, and Ontario Ministry of Research and Innovation. The data used in this project was obtained from mocap.cs.cmu.edu. The database was created with funding from the US National Science Foundation Grant EIA-0196217.