| Team Members |

|---|

| User Interface |

|---|

We wrote two programs to implement the View Morphing algorithm. The first program, called viewmorph, allows the user to interactively specify all the inputs required for the algorithm, and to preview the results. These inputs include the two images, the line correspondences, and the parameters for the postwarp.

The second program, called mkmorph, is a command-line application that takes the saved input generated using viewmorph and outputs a series of frames that can then be converted into a movie. We describe the process of creating a movie in a later section.

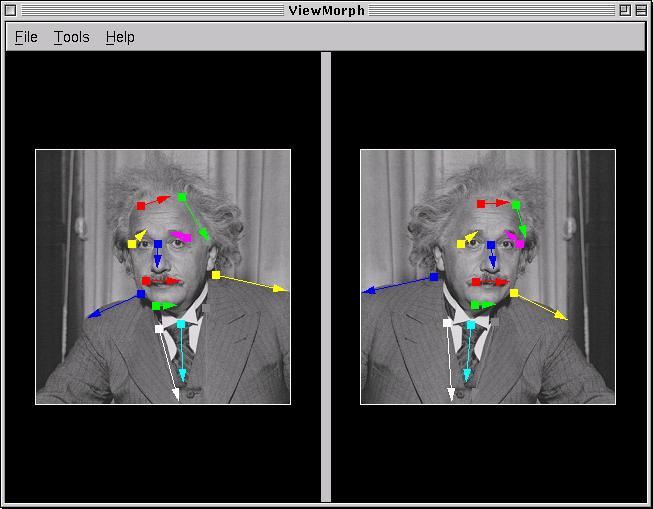

The main window of viewmorph is shown in Figure 1. Both panes are initially empty, and the user can use the menu to specify the two images. The left image must always be loaded from a file (in Vista format), but the right image can optionally be specified to be the vertical flip of the left image. This feature is useful if only a single image of the scene is available, as is the case in Figure 1.

Once the two images have been specified, the user can draw corresponding directed line segments in the two images. Lines can be drawn in either image by left-clicking to specify the endpoints. When a line is specified in one image, a corresponding line automatically appears at the same location in the other image. This will usually be the wrong place, but the user can relocate the endpoints of the line segments by right-clicking and dragging them. Corresponding lines are drawn in the same colour for better visualization. Lines can also be deleted by shift-left-clicking on either endpoint.

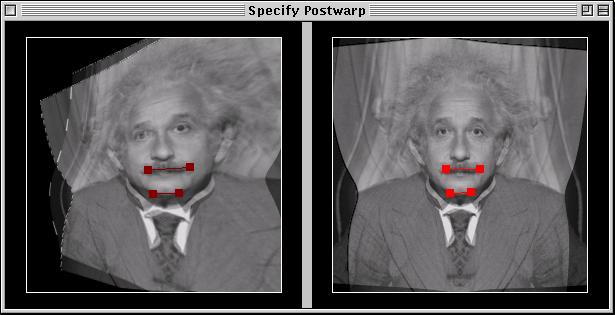

Before the image can be properly morphed, the user needs to specify the parameters for the postwarp. This is done as described by Seitz, by interactively specifying the positions of four control points throughout the entire morph. The control points are simply chosen to be the endpoints of the first two corresponding lines that were specified in the main window. We use three keyframes for the spline interpolation: the left image (t = 0), the halfway point (t = 0.5), and the right image (t = 1).

Figure 2 shows the Specify Warp window that can be accessed from the Tools|View-Based Morph menu. The left pane shows the two prewarped images morphed at the halfway point, and the right pane shows the two original images morphed at the halfway point. Linear interpolation is used to give a rough initial estimate of the positions of the control points in both panes. If the estimated positions of the control points do not give good results in the final view-morphed image, the user can move the control points in the right pane to make the required corrections.

After the postwarp has been fully specified, the user can open a View-Based Morph Preview window from the Tools|View-Based Morph menu, as shown in Figure 3. This window lets the user move the slider at the bottom to display morphs at any value of t between 0 and 1.

The program is also capable of applying the basic Beier-Neely morphing algorithm without applying the prewarping or postwarping operations. This is quite useful for purposes of comparison with the view-based method. A similar preview window for the plain morph is shown in Figure 4.

All of the input parameters for the morph, namely the images, the line correspondences, and the postwarp parameters, can be saved into a .vm file from the File menu. The .vm file can be loaded into another session of viewmorph, or it can be used with mkmorph from the command-line to generate frames of a movie.

| Generating Movies |

|---|

The program mkmorph can be used to generate movies of morphs specified by .vm files. The program produces a numbered sequence of image frames, as well as a parameter file compatible with the mpeg_encode utility from CS Berkeley. This utility generates a movie file in .mpeg format from a set of still pictures. The number of frames to produce is specified on the command line of mkmorph. Since we generate movies at 30 frames per second, this number determines both the length and the smoothness of the animation.
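The relationship between the frame count, the morph parameter t, and the movie length can be sketched as follows. This is only an illustration: the function names are hypothetical, and the even spacing of t over [0, 1] is an assumption rather than a description of what mkmorph actually does.

```python
def frame_times(num_frames):
    """Return one morph parameter t per frame, evenly spaced over [0, 1].

    Assumption: the first frame is the left image (t = 0) and the last
    frame is the right image (t = 1).
    """
    if num_frames < 2:
        raise ValueError("need at least two frames to span t = 0 to t = 1")
    return [i / (num_frames - 1) for i in range(num_frames)]


def duration_seconds(num_frames, fps=30):
    """Length of the resulting movie at the given frame rate."""
    return num_frames / fps


if __name__ == "__main__":
    times = frame_times(61)
    print(times[0], times[30], times[-1])  # 0.0 0.5 1.0
    print(duration_seconds(60))            # 2.0
```

Doubling the frame count halves the step in t between consecutive frames and doubles the playing time, which is why a single number controls both smoothness and length.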

| Beier-Neely Morphing |

|---|

We implemented a straightforward variant of Beier-Neely morphing. In this image morphing technique, manually selected corresponding features in the images control the morph by weighting pixels based on their relative positions with respect to the features. In our case, the features used for Beier-Neely morphing (points and lines) are the same features used to determine the stereo correspondence.

We simplified the weighting function used in the morph to homogenize the treatment of both points and lines. The parameters of the morph were hidden from the user, because we found that the quality of the morph was relatively insensitive to the choice of these parameters. The final weighting function that we hardcoded was $1/(0.1 + d^2)$, where $d$ is the distance of the pixel to the feature. Note that the Beier-Neely method is global, in that all features must be considered for all pixels, which makes it somewhat inefficient.
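A minimal sketch of the backward mapping for line features, using the weighting function quoted above, might look like this. The function names and data layout are hypothetical. Point features and zero-length lines are not handled here, so this should not be read as our actual routine.

```python
import math


def weight(d):
    """Weight of a feature at distance d from the pixel: 1 / (0.1 + d^2)."""
    return 1.0 / (0.1 + d * d)


def map_point(x, dst_lines, src_lines):
    """Map destination pixel x back to the source image.

    dst_lines and src_lines are parallel lists of directed segments
    ((px, py), (qx, qy)). Every line contributes to every pixel, which
    is what makes the method global.
    """
    total_w = 0.0
    shift_x = shift_y = 0.0
    for (p, q), (ps, qs) in zip(dst_lines, src_lines):
        pq = (q[0] - p[0], q[1] - p[1])
        px = (x[0] - p[0], x[1] - p[1])
        length = math.hypot(pq[0], pq[1])
        # u: position along the line (0 at p, 1 at q); v: signed distance from it.
        u = (px[0] * pq[0] + px[1] * pq[1]) / (length * length)
        v = (px[0] * -pq[1] + px[1] * pq[0]) / length
        # Rebuild the point from the same (u, v) relative to the source line.
        pqs = (qs[0] - ps[0], qs[1] - ps[1])
        length_s = math.hypot(pqs[0], pqs[1])
        xs = (ps[0] + u * pqs[0] + v * -pqs[1] / length_s,
              ps[1] + u * pqs[1] + v * pqs[0] / length_s)
        # Distance from the pixel to the segment, not to the infinite line.
        if u < 0:
            d = math.hypot(px[0], px[1])
        elif u > 1:
            d = math.hypot(x[0] - q[0], x[1] - q[1])
        else:
            d = abs(v)
        w = weight(d)
        shift_x += w * (xs[0] - x[0])
        shift_y += w * (xs[1] - x[1])
        total_w += w
    return (x[0] + shift_x / total_w, x[1] + shift_y / total_w)
```

With a single line pair the weight cancels out and the pixel simply follows the line. With several pairs, the displacement is a weighted average dominated by the nearest features.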

| Calculating the Prewarp |

|---|

The first step in calculating the prewarp is estimating the fundamental matrix, which relates corresponding image points. To do this, we use a modified version of the eight-point algorithm, as described by Hartley, that involves first translating and scaling the input points for better numerical conditioning. We solve a constrained linear system of the form $Ah = 0$ subject to $\|h\| = 1$, using the well-known fact that this is equivalent to finding the eigenvector of $A^T A$ with the smallest eigenvalue (zero when the correspondences are exact). To find this eigenvector robustly, we employ singular value decomposition.
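The conditioning step and the construction of the linear system can be sketched as below. The function names are hypothetical. The target mean distance of sqrt(2) follows Hartley's recommendation and is an assumption about our own choice of scale. The SVD step is omitted.

```python
import math


def normalizing_transform(points):
    """Translate the centroid to the origin and scale the mean distance to sqrt(2).

    Returns the 3x3 transform T (as nested lists) and the normalized points.
    Assumes the points are not all identical.
    """
    n = len(points)
    cx = sum(x for x, _ in points) / n
    cy = sum(y for _, y in points) / n
    mean_dist = sum(math.hypot(x - cx, y - cy) for x, y in points) / n
    s = math.sqrt(2.0) / mean_dist
    T = [[s, 0.0, -s * cx],
         [0.0, s, -s * cy],
         [0.0, 0.0, 1.0]]
    normalized = [(s * (x - cx), s * (y - cy)) for x, y in points]
    return T, normalized


def constraint_row(p0, p1):
    """One row of A for the constraint p1^T F p0 = 0.

    h holds the entries of F in row-major order, so each correspondence
    contributes one linear equation in the nine unknowns.
    """
    x0, y0 = p0
    x1, y1 = p1
    return [x1 * x0, x1 * y0, x1,
            y1 * x0, y1 * y0, y1,
            x0, y0, 1.0]
```

If the matrix estimated from the normalized points is F', the matrix for the original pixel coordinates is recovered as T1^T F' T0, where T0 and T1 are the transforms returned for the two images.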

We then rectify the images by finding homographies, $H_0$ and $H_1$, that transform the images onto the same plane so that their scanlines are aligned. We follow the method described in the appendix of Seitz's Ph.D. thesis, which involves (1) choosing the common plane indirectly, (2) composing a series of rotations, and then (3) applying a scaling and translation to bring the images on the common plane into alignment.

Under certain conditions, such as when the objects being view-morphed are considerably different, Seitz warns "it is advisable to leave out the prewarp entirely, since its automatic computation becomes less stable." We have encountered this instability in practice, especially when the viewpoints are far apart or the second image does not represent a true novel 3D viewpoint of the object in the first image.

| Calculating the Postwarp |

|---|

Our interface allows the user to specify the parameters for the postwarp, as described in Seitz's paper, by selecting the positions of four control points at three different keyframes. For any in-between frame, the control points are interpolated using a spline fitting operation. We can then uniquely determine the postwarp homography, $H_s$, by solving a constrained linear system, in much the same way that we solved for the fundamental matrix. The postwarp homography lets us transform morphed, prewarped images into the final view-morphed images of the object.
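As an illustration of the interpolation step, the sketch below passes a quadratic curve through the three keyframe positions of one control point. The choice of a quadratic (Lagrange) interpolant and the function name are assumptions made for this example. The text above only says that a spline fit is used.

```python
def interpolate_control_point(k0, k_half, k1, t):
    """Position of one control point at morph parameter t.

    k0, k_half and k1 are the (x, y) positions at t = 0, 0.5 and 1.
    The curve is the unique quadratic through those three keyframes.
    """
    b0 = 2.0 * (t - 0.5) * (t - 1.0)   # equals 1 at t = 0, 0 at the other keys
    b_half = -4.0 * t * (t - 1.0)      # equals 1 at t = 0.5
    b1 = 2.0 * t * (t - 0.5)           # equals 1 at t = 1
    return (b0 * k0[0] + b_half * k_half[0] + b1 * k1[0],
            b0 * k0[1] + b_half * k_half[1] + b1 * k1[1])
```

When the halfway keyframe is left at the midpoint of the two end positions, this reduces to linear interpolation. Moving it bends the path of the control point without changing its endpoints. The four interpolated points then give the eight constraints needed to fix the postwarp homography up to scale.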

For better image quality, and to avoid the errors introduced by repeated resampling, we fold the prewarping, morphing, and postwarping into a single operation applied at the per-pixel level. This is possible because we perform backwards warping and our implementation of the Beier-Neely morphing algorithm also uses a backwards mapping.
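The idea of chaining the three backward mappings so that each output pixel is resampled only once can be sketched as follows. The names are hypothetical, and the stages are named by direction rather than by matrix, to avoid committing to a particular convention for the prewarp and postwarp homographies.

```python
def apply_homography(H, p):
    """Apply a 3x3 homography (nested lists) to a 2D point."""
    x, y = p
    X = H[0][0] * x + H[0][1] * y + H[0][2]
    Y = H[1][0] * x + H[1][1] * y + H[1][2]
    W = H[2][0] * x + H[2][1] * y + H[2][2]
    return (X / W, Y / W)


def compose_backward(final_to_morphed, morphed_to_prewarped, prewarped_to_original):
    """Build one function taking a final-image pixel to original-image coordinates.

    final_to_morphed:      homography undoing the postwarp
    morphed_to_prewarped:  backward morph mapping (a function on points)
    prewarped_to_original: homography undoing the prewarp
    """
    def backward(p):
        p = apply_homography(final_to_morphed, p)
        p = morphed_to_prewarped(p)
        return apply_homography(prewarped_to_original, p)
    return backward


if __name__ == "__main__":
    undo_postwarp = [[0.5, 0.0, 0.0], [0.0, 0.5, 0.0], [0.0, 0.0, 1.0]]
    undo_prewarp = [[1.0, 0.0, 10.0], [0.0, 1.0, 20.0], [0.0, 0.0, 1.0]]
    toy_morph = lambda p: (p[0] + 1.0, p[1])  # stand-in for the Beier-Neely mapping
    backward = compose_backward(undo_postwarp, toy_morph, undo_prewarp)
    print(backward((4.0, 6.0)))  # (13.0, 23.0)
```

Only the final coordinates are used to sample the original image, so interpolation error is incurred once instead of three times.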

| Implementation |

|---|

We found that our implementation of the view-morphing algorithm suffered from instability issues. The correspondences must be specified very carefully in order to get good results.

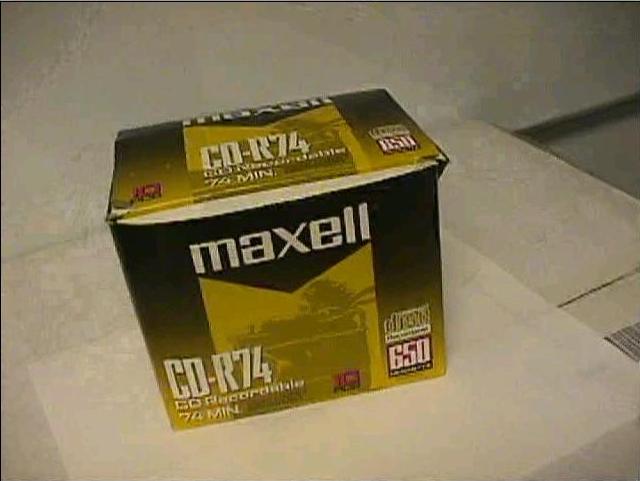

The ghosting effects in our results can be significant because of folds, in which surfaces visible in the original images become occluded in in-between images. Seitz suggests using a Z-buffer technique based on estimated point disparities to help account for folds. With regard to our morphing framework, Beier and Neely describe a similar problem calling for a solution they call "ghostbusting." They offer several alternative solutions, including shrinking or splitting line features that have come too close. These ghosting artifacts are especially visible in our Maxell CD-R box sequence.

Holes, on the other hand, are plausibly filled in by the bilinear interpolation used in our Beier-Neely morphing routine. This interpolation can be invalid if the hole is large, but in our test cases this did not seem to be a problem.

We also found that our implementation was very sensitive to incorrectly positioned correspondences. Adding one extra line feature in the wrong place could destroy the whole morph and produce awful results. We believe this is caused by unstable calculations leading to a poor estimate of the fundamental matrix. This was especially evident in one example where both epipoles were estimated to be close to (1,0,0). This implied that the two images were already in the same plane, but for our example, this was definitely not the case. The values of $p_1^T F p_0$, which should be exactly 0 for corresponding points, could be rather small (less than 0.1) and still produce bad results.
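One way to inspect an estimated fundamental matrix is to compute the residual for each correspondence, as in the sketch below. The function names are hypothetical. Because the fundamental matrix is only defined up to scale, the raw value of the residual depends on how the matrix is normalized, so the sketch also computes the distance in pixels from the second point to the epipolar line of the first, which does not depend on that scale.

```python
import math


def epipolar_line(F, p0):
    """Coefficients (a, b, c) of the epipolar line F p0 in the second image."""
    h0 = (p0[0], p0[1], 1.0)
    return tuple(sum(F[i][j] * h0[j] for j in range(3)) for i in range(3))


def algebraic_residual(F, p0, p1):
    """The value p1^T F p0, which is 0 for an exact correspondence."""
    a, b, c = epipolar_line(F, p0)
    return a * p1[0] + b * p1[1] + c


def epipolar_distance(F, p0, p1):
    """Distance in pixels from p1 to the epipolar line of p0.

    Assumes the line is not at infinity (a and b not both zero).
    """
    a, b, c = epipolar_line(F, p0)
    return abs(a * p1[0] + b * p1[1] + c) / math.hypot(a, b)


if __name__ == "__main__":
    # Fundamental matrix of an already rectified pair: both epipoles are (1, 0, 0)
    # and corresponding points must lie on the same scanline.
    F = [[0.0, 0.0, 0.0], [0.0, 0.0, -1.0], [0.0, 1.0, 0.0]]
    print(algebraic_residual(F, (3.0, 5.0), (10.0, 5.0)))  # 0.0
    print(epipolar_distance(F, (3.0, 5.0), (10.0, 8.0)))   # 3.0
```

Scaling F by 0.01 shrinks every algebraic residual by the same factor while leaving the pixel distances unchanged, which is one reason a small residual by itself says little about the quality of the estimate.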

To achieve better robustness, we could have taken a variety of measures. One approach would be to implement a RANSAC-style procedure that estimates the fundamental matrix from random subsets of the lines, in order to cope with outliers. Our correspondences could only be specified to a precision of 1-3 pixels using our interface, which makes matching features somewhat difficult. We would have really liked to implement a zooming feature to allow the user to specify correspondences at sub-pixel accuracy.

Of course, if the images do not represent true different 3D viewpoints of the same object (for example, there was a change of facial expression, or some object motion, or the two objects are actually different) then accuracy in the correspondences would be lost anyhow. Seitz recommends leaving out the prewarp entirely in this case, and just doing a plain image morph, manipulating the in-between images using whatever "postwarp" homography is necessary to make the results look acceptable. We didn't do this exactly, but we did compare our view-based morphing results to plain image morphing.

Finally, we found that we spent too much time working on infrastructure issues that distracted us from the real problem. Much of the core code would have been developed much faster in Matlab, for example.

| Results |

|---|

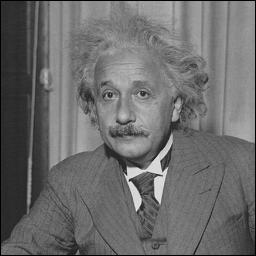

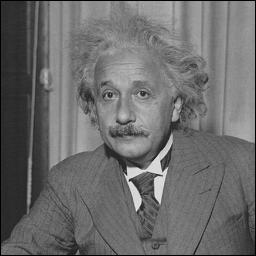

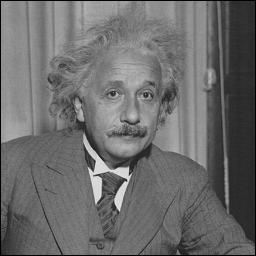

One of our test cases was an image of Albert Einstein that we flipped vertically to obtain a second image, and we are pleased with the result. However, we notice that the view-based morph is practically indistinguishable from the plain Beier-Neely morph.

Notice that there is some 2D image contraction near the middle of the movie. This is due to the viewpoints being far apart, and the effect can be corrected by manipulating the postwarp. Our interface for specifying the postwarp, however, can be counter-intuitive in cases like this. It is important to remember that the control points should be placed where we would like them to appear in the final image, which is not always close to the morphed approximation given.

We took an image of the Mona Lisa from Seitz's web site to see how our implementation compares with his. The biggest difference we noticed was a greater amount of ghosting in our version, but adding more correspondences helped remedy this problem. We have included two versions of the view-morphed Mona Lisa. The first movie was produced with 10 corresponding lines, while the second was produced with 23 lines. The second movie suffers much less from ghosting and we believe that if even more care and effort were taken in providing more correspondences, we could obtain quality much closer to Seitz's.

We took two pictures of a Maxell CD-R box from two different viewpoints. Here we can see very bad ghosting. As mentioned in the implementation section, this is probably due to the fact that we did not implement either of the "ghostbusting" techniques described by Seitz and Beier-Neely. However, closer examination of the final frames of both movies shows that the view-morphing approach suffers from much less distortion of the ghosted portion.

It is also instructive to look at the prewarped images before they are morphed. These are shown below for the Maxell Box images. If the fundamental matrix were estimated accurately, we would expect corresponding scanlines to be aligned vertically. However, the scanline alignment in this example is especially poor in the lower left-hand corner. Also note that since an entire side of the box was not visible from one viewpoint, no correspondences could be established in this area, contributing to the ghosting visible in the movie.

Here is another example of a view-morph on a real face from two different viewpoints. Notice how the view-based version gives a better illusion of 3D than the plain morphed version.

| Conclusions |

|---|

We were very pleased with the Beier-Neely morphing technique. It provides some very good results and is quite stable. It is easy to see directly how adding correspondences improves the quality of the morph. The view-based morphing technique, on the other hand, disappointed us a bit, as it did not seem to provide much improvement over plain image morphing in our test cases. In certain cases, for example where we don't want straight lines to be bent in intermediate frames, we expect that the view-morphing technique will produce more realistic-looking results. Even in some of our test cases we noticed a more realistic 3D effect given by the view-morph, but this was perhaps our over-active desire to see the algorithm working. In general, the extra effort required to implement the view-morphing technique did not give us proportionally better results, and so we were left a little disappointed.

Our problems with the view-morphing technique were most likely due to our particular implementation of calculating the fundamental matrix. If we had more time, we would have liked to implement zooming so that we could specify correspondences on a sub-pixel level, as well as explore other rectification methods like the one described by Loop and Zhang.

| References |

|---|