AniPaint: Interactive Painterly Animation From Video


People

Abstract

This paper presents an interactive system for creating painterly animations from video sequences. Previous approaches to painterly animation typically emphasize either purely automatic stroke synthesis or purely manual stroke keyframing. Our system supports a spectrum of interaction between these two approaches, which allows the user more direct control over stroke synthesis. We introduce an approach for controlling the results of painterly animation: keyframed Control Strokes can affect the placement, orientation, movement, and color of automatic strokes. Furthermore, we introduce a new automatic synthesis algorithm that traces strokes through a video sequence in a greedy manner but, instead of a vector field, uses an objective function to guide placement. This allows the method to capture fine details, respect region boundaries, and achieve greater temporal coherence than previous methods. All editing is performed with a WYSIWYG interface where the user can directly refine the animation. We demonstrate a variety of examples using both automatic and user-guided results, with a variety of styles and source videos.
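To make the idea of greedy, objective-guided stroke tracing more concrete, here is a minimal sketch in Python. It is not the algorithm from the paper or the AniPaint source: it works on a single grayscale image rather than a video, and the function name `trace_stroke`, the two cost terms (color difference and bend penalty), and all parameter values are assumptions chosen only for illustration. It shows the general pattern of extending a stroke one step at a time by picking the candidate point with the lowest cost, instead of following a precomputed vector field.

```python
import math


def trace_stroke(image, seed, step=1.0, max_points=20, n_angles=16,
                 color_weight=1.0, bend_weight=0.1, max_cost=0.5):
    """Greedily grow one stroke path over a grayscale image.

    `image` is a list of rows with values in [0, 1]; `seed` is an (x, y) pair.
    The cost terms below are illustrative assumptions, not the paper's objective.
    """
    height, width = len(image), len(image[0])

    def sample(x, y):
        # Nearest-pixel lookup; returns None outside the image.
        col, row = int(round(x)), int(round(y))
        if 0 <= row < height and 0 <= col < width:
            return image[row][col]
        return None

    stroke_color = sample(*seed)
    if stroke_color is None:
        return []

    path = [seed]
    heading = None  # direction of the previous step, in radians
    while len(path) < max_points:
        x, y = path[-1]
        best = None
        for k in range(n_angles):
            angle = 2.0 * math.pi * k / n_angles
            nx = x + step * math.cos(angle)
            ny = y + step * math.sin(angle)
            value = sample(nx, ny)
            if value is None:
                continue
            if heading is None:
                bend = 0.0
            else:
                # Absolute turning angle, wrapped into [0, pi].
                diff = angle - heading
                bend = abs(math.atan2(math.sin(diff), math.cos(diff)))
            # Objective: stay close to the stroke color and avoid sharp turns.
            cost = color_weight * abs(value - stroke_color) + bend_weight * bend
            if best is None or cost < best[0]:
                best = (cost, (nx, ny), angle)
        # Stop when every candidate is too costly, e.g. at a region boundary.
        if best is None or best[0] > max_cost:
            break
        path.append(best[1])
        heading = best[2]
    return path
```

With a color term that dominates the bend term, a stroke seeded inside a uniform region turns or stops rather than crossing into a region of a very different color. A video version would also need terms linking strokes across frames, which this sketch does not attempt.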

Paper

Peter O'Donovan, Aaron Hertzmann, AniPaint: Interactive Painterly Animation From Video (Preprint), IEEE Transactions on Visualization and Computer Graphics (TVCG), Vol. 18, No. 3, pages 475-487, March 2012. BibTeX | Appendix

Videos

Supplementary Material

1024x768, 3000kbps (117MB) | 1280x960, 6000kbps (233MB)

Comparison to Previous Work

1024x768, 3000kbps (48MB) | 1280x960, 6000kbps (95MB)

If you need any of the source videos for research purposes, please let me know.

Code/Binaries

AniPaint is written in C++ with Qt 4.4 and was developed on Windows, though it should compile on other platforms. It builds on Ce Liu's Human-Assisted Motion Annotation system. If you use this code for a publication, we ask that you cite the above paper.

Source: anipaint_src.zip

Test Projects: projects.zip

Windows Binaries: anipaint_bin.zip

Recommended hardware is at least 2 GB of RAM and a 2 GHz processor. A graphics card that supports frame buffer objects is required, so laptops and older machines may not work. Note that this is research code and has bugs, so save often. Feel free to email me with questions, though I can't promise to be able to help.

I have also written a brief user guide for the system.

Acknowledgements

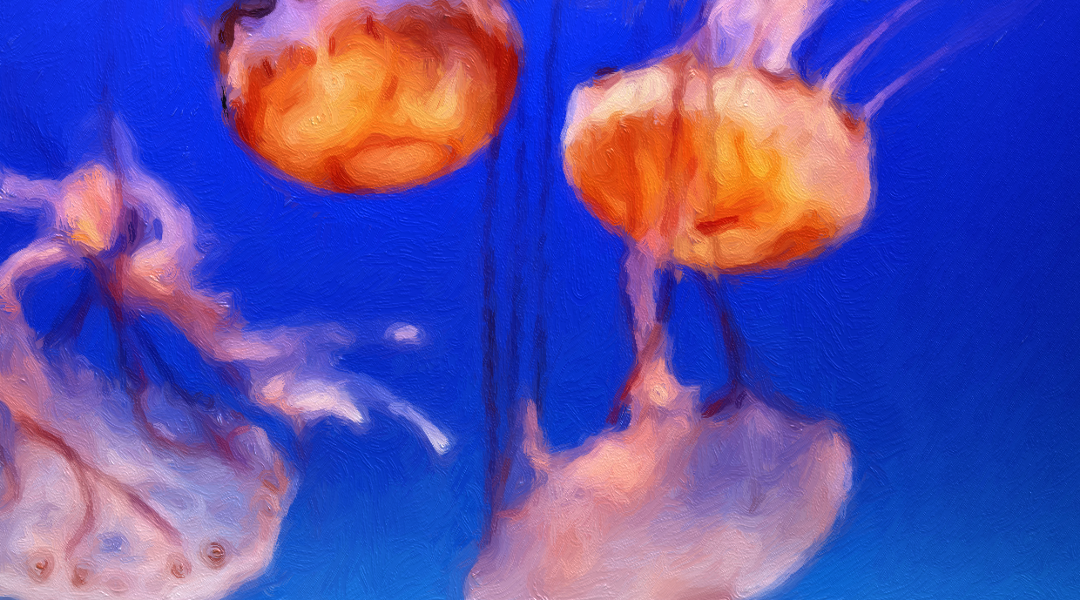

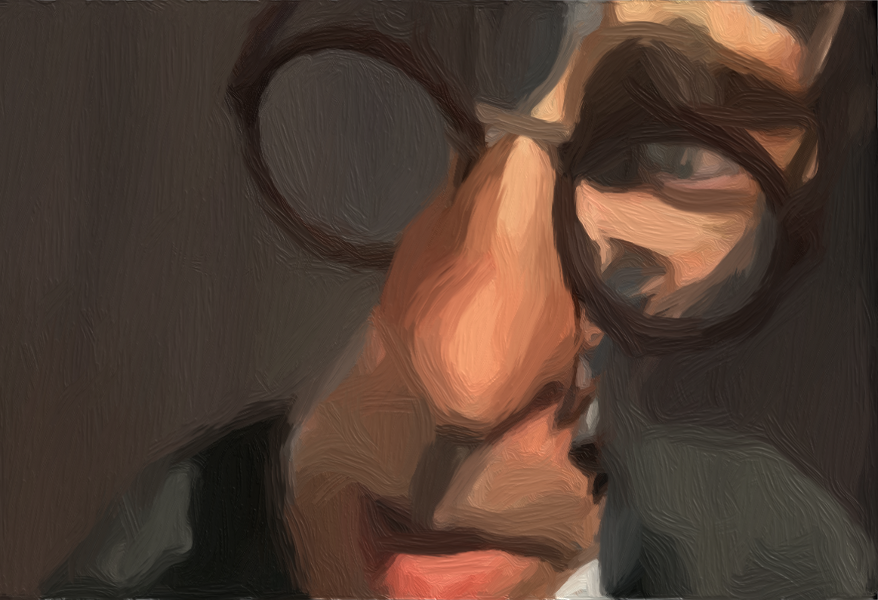

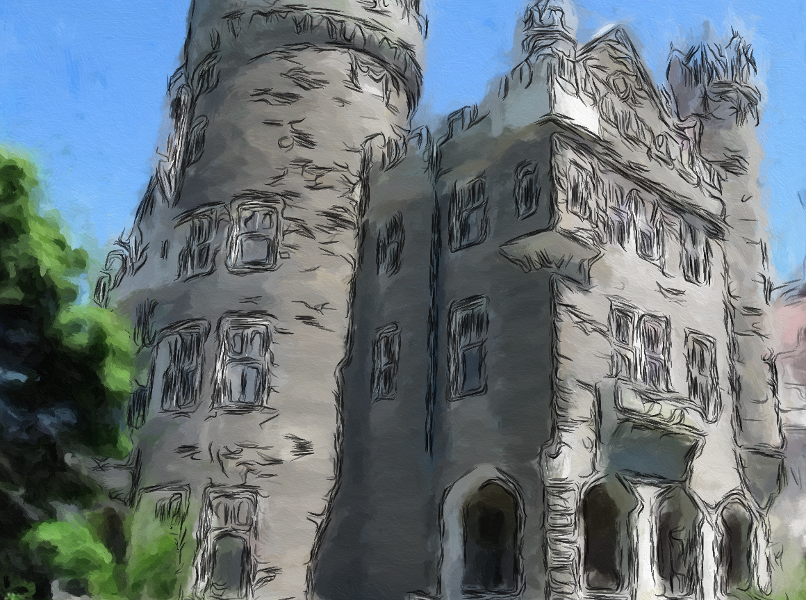

The authors would like to thank Simon Breslav, Igor Mordatch, and Ryan Schmidt for their user testing and helpful feedback. We thank Ce Liu for his Human-Assisted Motion Annotation code. We thank Chris Landreth, Copperheart, and the NFB for the clip from Ryan, and Liang Lin for the Lady video. We also thank the following individuals for their Creative Commons licensed source videos: Wen Zhang for the Pool Player video, Rick Cooper for the Horses video, Eugenia Loli-Queru for the Jellyfish video, and Mike McCabe for the Sunset video. The Dolphin and Lily videos were purchased from Artbeats. This research is supported in part by NSERC, CIFAR, CFI, and Ontario MRI.

Stills
